The Best Vivid Videos from Sora 2 and Veo 3.1, for Free

We live in a noisy world. Not just auditory noise, but visual noise.

Every day, we scroll through miles of content on our screens. A blur of faces, landscapes, and products passes by our thumbs. In this relentless stream of information, the static image—once the king of media—is beginning to fade into the background. It is becoming invisible.

Why? Because the human eye, like a predator's, is evolutionarily hardwired to detect motion. Movement signals life. Movement signals danger. Movement signals story.

When you post a static photo today, you are asking your audience to pause and imagine the context. When you post a video, you are doing the work for them. You are inviting them into a living world.

The “Engagement Gap”

I realized this painful truth recently while managing a social media campaign for a small coffee brand. We had stunning photography: steam rising from a fresh brew, sunlight hitting the ceramic mug, coffee beans scattered artistically. They were beautiful photos.

And they were being ignored.

The engagement was flat. The algorithm punished us. We were shouting into a void because our visuals, while pretty, were dead. We needed video, but we didn’t have the budget for a videographer, and I certainly didn’t have the time to learn complex animation software.

Breaking the “Video Barrier”

This is the dilemma millions of creators face: The Video Barrier.

On one hand, you have the ease of photography. On the other, the high engagement of video. Bridging that gap usually requires expensive gear, powerful computers, and a steep learning curve.

Or at least, it used to.

I discovered a solution that didn’t just bridge the gap—it dismantled it completely. It wasn’t about shooting video; it was about waking up the photos I already had. This solution is Image to Video AI.

A New Kind of Magic

My first experiment was simple. I took one of those coffee photos—the one with the steam. I uploaded it, typed a simple command, and waited.

What came back wasn’t a cheesy filter. It was a cinemagraph. The steam swirled with chaotic elegance, fading naturally into the air. The sunlight on the table seemed to shimmer slightly as if a tree branch outside was swaying in the wind.

It was hypnotic. I posted it, and the engagement didn’t just double; it tripled. People stopped scrolling because the image felt alive.

The Tech Stack: Sora 2 & Veo 3.1

What makes this possible isn’t magic, though it feels like it. It’s the convergence of two titans in the AI space: Sora 2 and Veo 3.1.

To understand why this platform is superior to the “gimmicky” animation apps of the past, you have to understand the roles these two models play. Think of them as the Architect and the Artist.

Sora 2: The Narrative Architect

Sora 2 is the model that interprets intent.

- Deep Understanding: If you upload a picture of a campfire and type “crackling fire,” Sora 2 knows that fire implies flickering light on the surrounding faces. It understands the scene, not just the pixels.

- Creative Freedom: It allows you to add elements that aren’t there. Want to add falling snow to a sunny street? Sora 2 understands how to integrate that new element seamlessly.

Veo 3.1: The Visual Artist

Veo 3.1 is the engine that understands physics.

- Motion Integrity: It ensures that water flows downhill, that hair blows in the direction of the wind, and that shadows stretch correctly.

- High-Definition Realism: Veo 3.1 is designed to minimize the “warping” effect, where faces distort as they move. It preserves the integrity of the original subject while animating the world around it.

The ROI of Motion: A Comparative Look

Why should you switch from static images or traditional video editing to AI-generated video? Let’s look at the Return on Investment (ROI) in terms of time, money, and impact.

The Visual Content Hierarchy

| Metric | Static Photography | Traditional Video Production | Image to Video AI |
| --- | --- | --- | --- |
| Production Time | Instant (Snap & Post) | Days (Shoot, Edit, Render) | Seconds (Upload & Prompt) |
| Cost | Low | High (Gear + Talent) | Free / Low Cost |
| Viewer Retention | Low (< 2 seconds) | High (if quality is good) | Very High (The “Wow” Factor) |
| Technical Barrier | Low | Very High | None (Text Prompts) |
| Reusability | One-time use | Hard to re-edit | Infinite Variations |
| Viral Potential | Low | High | High |

Three Ways to Transform Your Digital Presence

Whether you are a business owner, an influencer, or a digital artist, this tool unlocks specific superpowers.

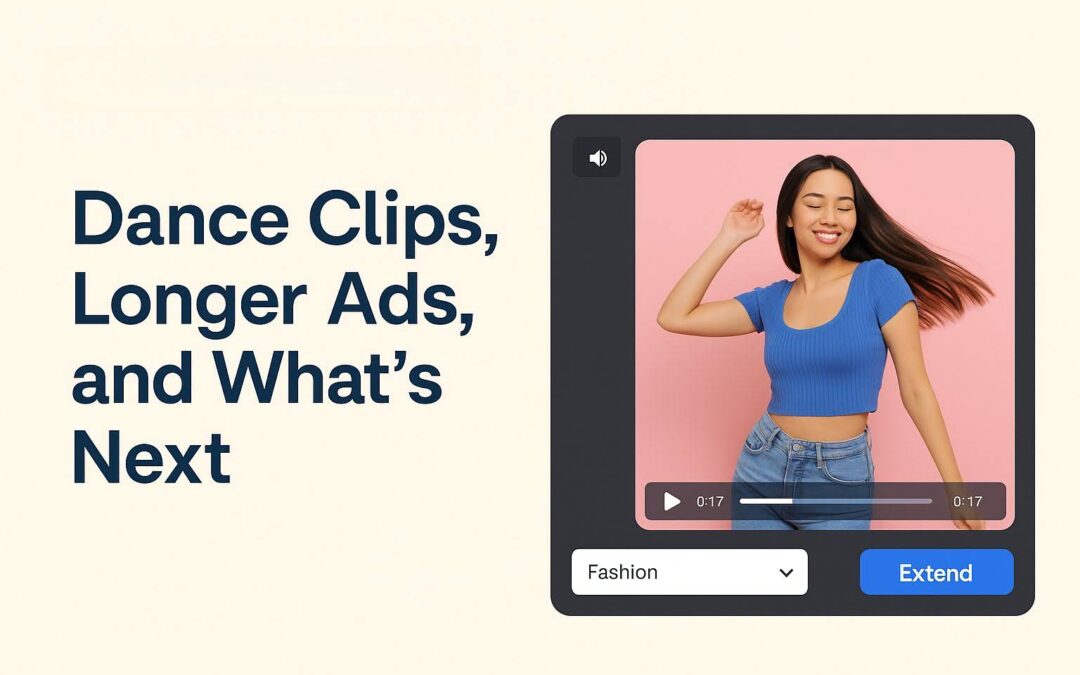

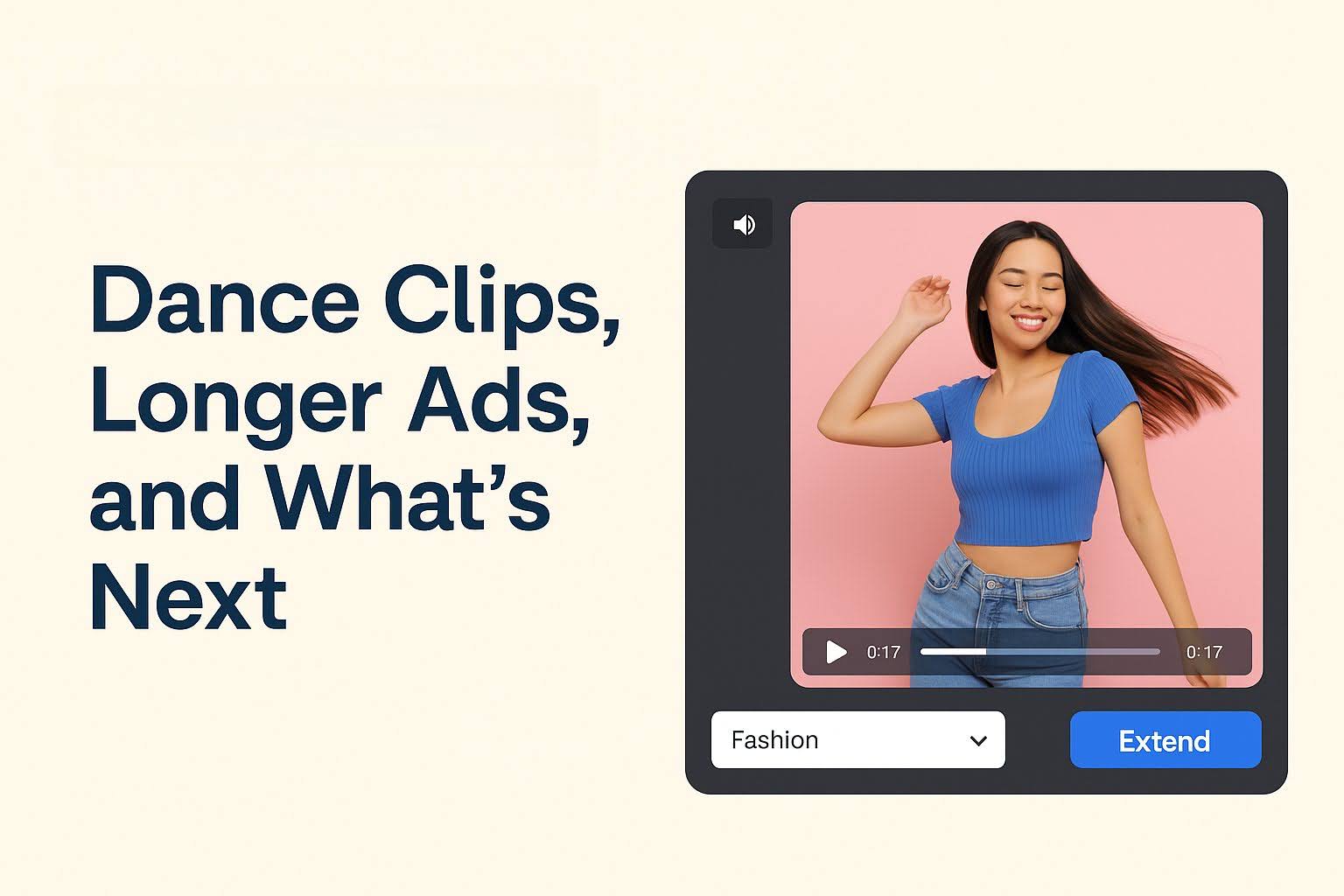

1. The “Scroll-Stopper” Ad

Imagine you are selling a waterproof watch. A photo of the watch in water is nice.

But imagine an ad where the watch is submerged, with water rippling around it and bubbles rising slowly to the surface, catching the light. You haven’t just shown the product; you’ve demonstrated its environment. You’ve created a vibe. Stopping the scroll like this is how you can lower your Cost Per Click (CPC).

2. The Atmospheric Storyboard

Writers and filmmakers are using this to pitch ideas. Instead of showing a static storyboard, they are showing “mood films.” A dark alleyway with fog rolling in. A cyberpunk city with flying cars zooming past. It helps the audience feel the story before a single frame is filmed.

3. The Living Portrait

This is for the sentimentalists. We all have photos of people we miss. Animating a smile, a blink, or a gentle nod can turn a flat image into a moment of connection. It’s not about replacing the memory; it’s about enhancing the nostalgia.

Mastering the Prompt: A Quick Guide

The secret sauce to getting Hollywood-level results is in the prompt. Since Sora 2 takes its direction from your text, you need to speak to it clearly.

The Formula: Subject + Action + Atmosphere

- Weak Prompt: “Move the clouds.”

- Strong Prompt: “Cumulus clouds drifting slowly across a deep blue sky, casting moving shadows on the green hills below, cinematic lighting.”

- Weak Prompt: “Make the car drive.”

- Strong Prompt: “Vintage red sports car driving down a coastal highway, wheels spinning, dust kicking up from the tires, sunset lighting.”

The more specific you are about the atmosphere, the better Veo 3.1 can render the lighting and physics.
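If you generate a lot of variations, it can help to treat the Subject + Action + Atmosphere formula as a template rather than typing each prompt from scratch. Below is a minimal Python sketch, assuming you simply paste the resulting text into the tool’s prompt box. `PromptSpec` and `build` are hypothetical names invented for illustration. They are not part of any Sora 2 or Veo 3.1 API, and the sketch only assembles text.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical helper for the Subject + Action + Atmosphere formula (not a Sora/Veo API)."""
    subject: str                                          # what is in the frame
    action: str                                           # how it moves
    atmosphere: list[str] = field(default_factory=list)   # lighting, mood, motion details

    def build(self) -> str:
        # Subject and action form the core clause; atmosphere details are appended
        # as comma-separated modifiers, mirroring the "strong prompt" examples.
        parts = [f"{self.subject.strip()} {self.action.strip()}"]
        parts.extend(detail.strip() for detail in self.atmosphere if detail.strip())
        return ", ".join(parts) + "."


car = PromptSpec(
    subject="Vintage red sports car",
    action="driving down a coastal highway",
    atmosphere=["wheels spinning", "dust kicking up from the tires", "sunset lighting"],
)
print(car.build())
# prints: Vintage red sports car driving down a coastal highway, wheels spinning, dust kicking up from the tires, sunset lighting.
```

Keeping the atmosphere as a list makes it easy to swap one detail (say, “sunset lighting” for “overcast morning light”) while holding the subject and action fixed, which is a cheap way to produce several variations from the same photo.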

Step Into the Future of Content

We are transitioning from the “Information Age” to the “Experience Age.” People don’t just want to see things; they want to experience them.

Static images are windows—you look at them.

Animated videos are doors—you walk through them.

With the power of Sora 2 and Veo 3.1, you no longer need a key to open that door. You just need your imagination. The technology has democratized high-end visual effects, making them accessible to anyone with a browser.

Conclusion

Don’t let your best content die in the camera roll. Don’t let your brand get lost in the static noise of the internet.

Take your favorite photo. Give it breath. Give it motion. Give it life.

The world is moving. It’s time your photos caught up.